This afternoon I attended the first meeting of the Measuring National Well-being Advisory Forum. Most of the people there were from Government agencies or statistical bodies, so it was good that the RSA was invited to make a contribution. Between now and April the Office for National Statistics is conducting a public conversation around the Prime Minister’s intention to measure well-being as a key national statistic, and there are plans afoot for the RSA to host some part of that conversation.

There were many fascinating points made, ranging from the challenge of undertaking international comparisons to the relationship between well-being today and longer term resilience. Being a bit of a nerd and a lot of a sceptic about opinion polling, I particularly enjoyed points (the Chatham House Rule stops me attributing them) about the vagaries of measurement. For example, there is the impact of focussing on an issue and therefore prompting a response. If you ask people whether they enjoy their car (prompting them to recall their decision to buy it) there is a reasonably strong correlation with the amount they paid. If, however, you ask how much they enjoyed commuting to work in the car there is no correlation. Another great point concerned the relationship between experiential questions (‘how was today/yesterday for you?’) and evaluative ones (‘how satisfied are you overall with your life?’). Apparently if you ask the former before the latter it reduces the average satisfaction score by nine points, which, in this context, is a huge and confounding difference.

An issue which spurred me to speak concerned the way the media will report the outcomes of a top line life satisfaction question. A Government advisor told us that in a big data set (such as the Integrated Household Survey) the average is unlikely ever to move by more than about two points, say, between 73% and 75% satisfaction. The danger is that the top line statistic is seen to be useless in terms of casting any light on anything.

My intervention was to confirm the importance of framing the findings and getting it right from the start. I well remember the largely futile attempts we made in Government to get journalists to report the reliable British Crime Survey rather than much less reliable - but usually more alarming and newsworthy - reported crime stats.

A key question here may be whether we focus on the average or on key thresholds. Think here of the Labour Force Survey: it does generate a figure for the average hours worked but this is rarely quoted. Instead people focus on three key thresholds: full-time employment, part-time employment and unemployment. Presumably the same thing could happen with a life satisfaction measure. The press and public might not be very concerned about small movements in the average but there would surely be much more interest in the aggregate number of happy people (say, those over 80% on the satisfaction measure) and those categorised as unhappy (say, below 60%).
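To make the contrast concrete, here is a minimal sketch in Python. The scores are invented purely for illustration (they are not ONS or Integrated Household Survey data), the `summarise` function is my own hypothetical helper, and the 80 and 60 cut-offs are simply the example thresholds suggested above. It shows how an average can barely move while the shares of people above and below the thresholds shift a good deal.

```python
from statistics import mean


def summarise(scores, happy_cutoff=80, unhappy_cutoff=60):
    """Return the mean score plus the share of people above the 'happy'
    cut-off and below the 'unhappy' cut-off (both cut-offs are illustrative)."""
    n = len(scores)
    return {
        "mean": mean(scores),
        "share_happy": sum(s > happy_cutoff for s in scores) / n,
        "share_unhappy": sum(s < unhappy_cutoff for s in scores) / n,
    }


# Invented satisfaction scores on a 0-100 scale for two hypothetical years.
year_one = [50, 65, 70, 75, 78, 79, 79, 79, 85, 90]
year_two = [40, 55, 58, 75, 78, 82, 84, 86, 90, 92]

first = summarise(year_one)
second = summarise(year_two)

for label, result in (("Year one", first), ("Year two", second)):
    print(
        f"{label}: mean {result['mean']}, "
        f"happy {result['share_happy']:.0%}, "
        f"unhappy {result['share_unhappy']:.0%}"
    )
```

In this made-up example the average slips by a single point, yet the share counted as happy and the share counted as unhappy both rise sharply. That is the kind of movement a threshold-based headline would pick up and an average would hide.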

Another part of the conversation focussed on how the public would respond to the whole process. As someone said, the thing with subjective measures is that they allow people to say they are unhappier than they really are just to cock a snook at an unpopular Government. The public may also become even more sceptical if top line satisfaction figures are used to legitimise political or policy claims (in the NHS, patient satisfaction ratings at an all-time high co-existed with a large minority of people saying the NHS was in crisis).

There was then much agreement on two ideas: first, that well-being statistics should be seen not just as Government data but as a public resource (for example, telling us which job or location choices might make us most content) and, second, that the conversation with the public about what to measure and what we should draw from the data should move beyond the preparatory stage into continuing oversight.

At the end we were all asked to use our own channels to provoke further debate and to direct people toward the ONS’s own website.

Job done

Related articles

-

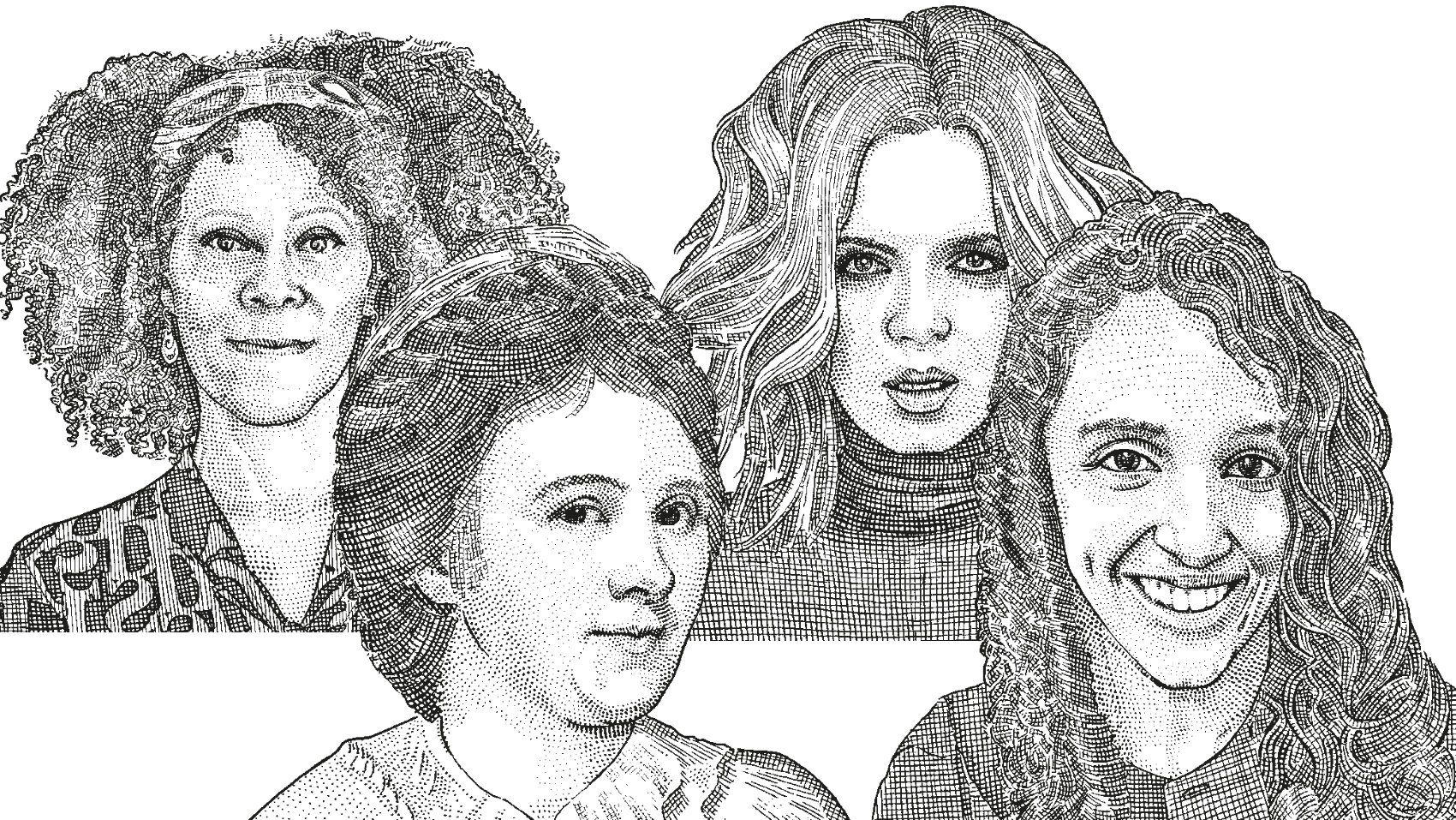

Nine famous female Fellows inspiring inclusion

Dean Samways

International Women’s Day 2024 invites us to imagine a world where all genders enjoy equality. Where prejudice and discrimination no longer exist. This is the world our work is helping deliver to this and future generations.

-

Fellows Festival 2024: changemaking for the future

Mike Thatcher

The 2024 Fellows Festival was the biggest and boldest so far, with a diverse range of high-profile speakers offering remarkable stories of courageous acts to make the world a better place.

-

Inspired by nature

Rebecca Ford Alessandra Tombazzi Penny Hay

Our Playful green planet team summarises a ‘lunch and learn’ at RSA House that focused on how the influence of nature can benefit a child’s development.

Be the first to write a comment

Comments

Please login to post a comment or reply

Don't have an account? Click here to register.