In 2013, researchers at Oxford University estimated that 47% of US jobs could be automated within two decades. More recently, OECD researchers have suggested that just 9% of all jobs are at risk. McKinsey Global Institute have estimated 5% and PwC 30%. Why do they get such different results?

How do these predictions work?

The 2013 Oxford University study provides the blueprint. Other studies either build on it or replicate its methodology. Frey and Osborne used O∗NET, an online database of US job descriptions, to develop a machine learning algorithm that estimates the ‘probability of computerisation’ for different occupations. The algorithm was trained on 70 occupations, which were labelled in the following ways:

- AI experts assigned 1 to those occupations which they agreed were fully automatable, and 0 otherwise. They were guided by the question: “can the tasks of this job be sufficiently specified, conditional on the availability of big data, to be performed by state of the art computer-controlled equipment?”

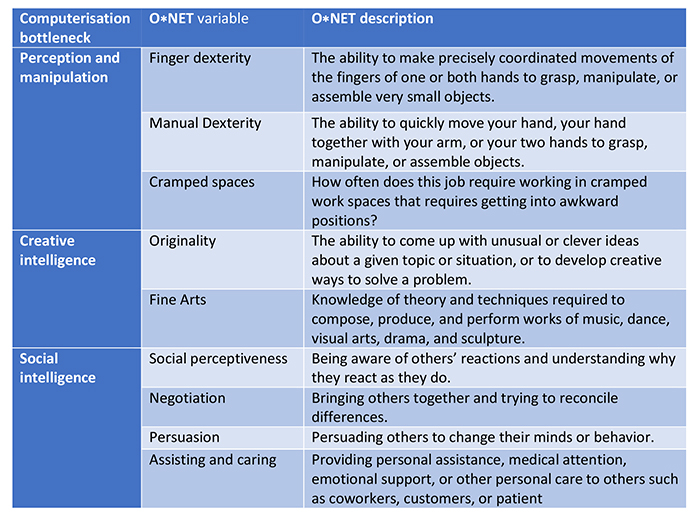

- Frey and Osborne assigned scores corresponding to the levels of engineering bottlenecks. Table 1 provides more detail here; perception and manipulation, creativity, and social intelligence are all considered more robot proof. So one might expect that jobs which involve performing open heart surgery, composing music or looking after children are safe career choices.

Figure 1: O∗NET variables that serve as indicators of bottlenecks to computerisation (adapted from Frey and Osborne, 2013)

The ‘probability of computerisation’, then, indicates the likelihood of an occupation being automated, based on these inputs. Frey and Osborne estimate that 47% of jobs in the US are at high risk of being automated within 10 to 20 years. Transferring this methodology to UK data yields a similarly daunting figure: 35% of jobs.
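To make the mechanics concrete, here is a minimal sketch of the label-then-predict idea described above. It is an illustration under loud assumptions: the occupations, bottleneck scores and labels are invented, and a plain logistic regression stands in for the study's own model, which this post does not specify.

```python
import math

# Hypothetical hand-labelled training set (NOT data from the study).
# Features are bottleneck scores in [0, 1] for perception and manipulation,
# creativity, and social intelligence. Label 1 = judged fully automatable.
LABELLED = [
    ((0.1, 0.1, 0.2), 1),
    ((0.2, 0.1, 0.1), 1),
    ((0.3, 0.2, 0.2), 1),
    ((0.8, 0.7, 0.9), 0),
    ((0.9, 0.8, 0.6), 0),
    ((0.6, 0.9, 0.8), 0),
]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train(examples, learning_rate=0.5, epochs=2000):
    """Fit a logistic regression with stochastic gradient descent."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            predicted = sigmoid(bias + sum(w * x for w, x in zip(weights, features)))
            error = predicted - label
            weights = [w - learning_rate * error * x for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


def probability_of_computerisation(model, features):
    """Score an unlabelled occupation from its bottleneck features."""
    weights, bias = model
    return sigmoid(bias + sum(w * x for w, x in zip(weights, features)))


model = train(LABELLED)
low_bottleneck = probability_of_computerisation(model, (0.1, 0.1, 0.1))
high_bottleneck = probability_of_computerisation(model, (0.9, 0.9, 0.9))
print(f"low bottlenecks: {low_bottleneck:.2f}, high bottlenecks: {high_bottleneck:.2f}")
```

The point of the sketch is the workflow: a small set of labelled occupations trains a model, which then assigns a probability to every other occupation from its bottleneck scores alone.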

However, this is not where the story ends. There are at least four points of contention between the studies, which give rise to a wide range of estimates.

Automation mostly threatens tasks not whole jobs

The OECD study by Arntz, Gregory and Zierahn was motivated by a sensible criticism. Automation targets certain tasks rather than whole occupations, and by taking an occupation-based approach, Frey and Osborne don’t account for the fact that different jobs within an occupation vary considerably in their task make-up. Junior consultants spend more time doing admin and less time advising clients than more senior consultants. Retail assistants at Selfridges spend more time persuading customers than retail assistants at Tesco. However, in both examples these are the same occupations with the same O∗NET descriptions, and so they are given identical risk ratings by Frey and Osborne.

Arntz, Gregory and Zierahn’s task-based approach uses the OECD’s Survey of Adult Skills (PIAAC), which contains individual-level data on the task composition of jobs. By transferring Frey and Osborne’s findings to this more granular data, and using it to estimate the automatability of workplace tasks, they address this criticism. Critically, their predictions are based on the task composition of jobs both across and within occupations.
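The difference between the two approaches can be sketched in a few lines. This is a simplification rather than the OECD authors' actual estimation: the task scores and time shares below are hypothetical, and a time-weighted average stands in for their model.

```python
# Hypothetical automatability of individual tasks (0 = safe, 1 = fully automatable).
TASK_AUTOMATABILITY = {"admin": 0.9, "advising clients": 0.2}

# Hypothetical share of working time each worker spends on each task.
WORKERS = {
    "junior consultant": {"admin": 0.7, "advising clients": 0.3},
    "senior consultant": {"admin": 0.2, "advising clients": 0.8},
}


def task_based_risk(time_shares):
    """Time-weighted average automatability of one worker's tasks."""
    return sum(share * TASK_AUTOMATABILITY[task] for task, share in time_shares.items())


def occupation_based_risk(workers):
    """A single score for the whole occupation: the mean of its workers' scores."""
    scores = [task_based_risk(shares) for shares in workers.values()]
    return sum(scores) / len(scores)


occupation_score = occupation_based_risk(WORKERS)
for name, shares in WORKERS.items():
    print(f"{name}: task-based {task_based_risk(shares):.2f}, "
          f"occupation-based {occupation_score:.2f}")
```

Under the occupation-based view both consultants receive the same score, while the task-based view separates the admin-heavy junior from the client-facing senior.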

Job disruption will be more common than unemployment

The OECD study’s estimate is less daunting: across 21 OECD countries, only 9% of jobs are at risk of automation. The potential for automating entire occupations is said to be much lower because certain parts of these ‘bundles of tasks’ are very difficult to automate. Take retail assistants: Frey and Osborne rate the occupation as highly automatable, yet only 4% of retail assistants perform their jobs without social interaction.

Arntz, Gregory and Zierahn predict that more jobs are likely to experience radical change than be automated. In the UK, the figures are 25% and 10% respectively. Radical change is likely when 50-70% of a job’s tasks are automatable; automation when more than 70% are. So, under both estimations, 35% of the UK workforce will have to adapt significantly: the OECD’s 25% plus 10% matches the 35% obtained by applying Frey and Osborne’s method to UK data. The actual disagreement here is over the extent of technological unemployment, not the number of jobs ‘disrupted’ by AI and robotics.
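The banding rule is simple enough to write down directly. In the sketch below the thresholds come from the paragraph above, but the list of jobs is hypothetical and has been constructed so that the bands reproduce the UK shares quoted there.

```python
from collections import Counter


def classify(share_automatable):
    """Band a job by the share of its tasks that are automatable."""
    if share_automatable > 0.70:
        return "automation"
    if share_automatable >= 0.50:
        return "radical change"
    return "lower risk"


# Hypothetical jobs: 2 above 70%, 5 between 50% and 70%, 13 below 50%.
JOBS = [0.85, 0.75] + [0.55, 0.60, 0.65, 0.52, 0.68] + [0.30] * 13

counts = Counter(classify(share) for share in JOBS)
shares = {band: count / len(JOBS) for band, count in counts.items()}
adapting = shares["automation"] + shares["radical change"]
print(shares)
print(f"share needing to adapt significantly: {adapting:.0%}")
```

Adding the two upper bands together gives the share of workers who will have to adapt, whichever label is attached to them.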

Small changes in models make big differences to final predictions

In what might bring the debate full circle, PwC offer a technical criticism of the OECD study. PwC agree with the OECD authors’ substantive objection but suggest that the OECD model over-estimates the difference a task-based approach makes. In an appendix, they show that by using a different set of predictive features, with improved performance metrics, the results more closely match the occupation-based approach. PwC estimate that 38% of US and 30% of UK jobs are at high risk of automation.

How the figures are framed matters

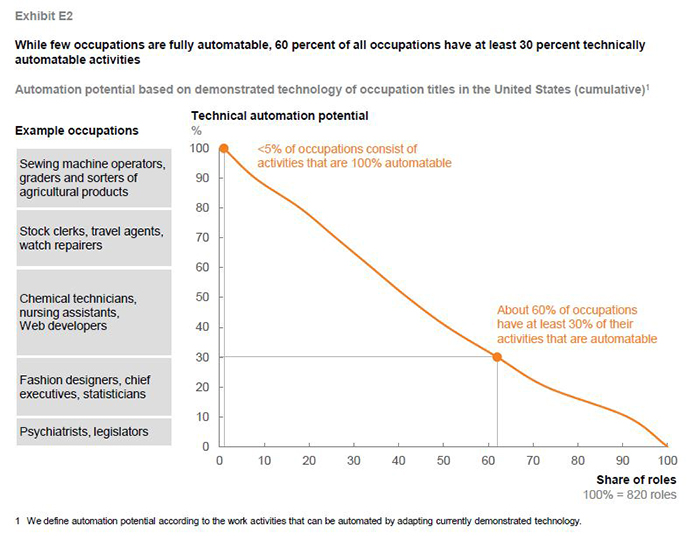

McKinsey’s task-based approach appears to corroborate the OECD study. They use the same data and a similar methodology to Frey and Osborne but disaggregate occupations into tasks and capabilities before getting input from experts. The result: less than 5% of all occupations are candidates for full automation.

However, the way this is framed makes it not strictly comparable with the other studies, which all adopt 70% as the threshold for being at high risk. McKinsey report their findings at a threshold of 100%. This makes the 5% figure misleading, especially as their model suggests around 30% of jobs are 70% automatable, a figure similar to PwC’s.

Figure 2: Cumulative automation potential based on demonstrated technology and US occupations (MGI, 2017)
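The framing effect is easy to demonstrate: the same distribution of automation potential produces very different headlines depending on the reporting threshold. The distribution below is hypothetical, built only to mirror the 5% and 30% figures discussed above; it is not McKinsey's data.

```python
def share_at_or_above(potentials, threshold):
    """Fraction of occupations whose automation potential meets the threshold."""
    return sum(1 for p in potentials if p >= threshold) / len(potentials)


# Hypothetical automation potential for 20 occupations (1.0 = fully automatable).
POTENTIALS = [1.0, 0.95, 0.9, 0.85, 0.8, 0.75,
              0.65, 0.6, 0.55, 0.5, 0.45, 0.4, 0.35,
              0.3, 0.25, 0.2, 0.15, 0.1, 0.05, 0.0]

fully_automatable = share_at_or_above(POTENTIALS, 1.0)
high_risk = share_at_or_above(POTENTIALS, 0.7)
print(f"threshold 100%: {fully_automatable:.0%}")
print(f"threshold 70%: {high_risk:.0%}")
```

Nothing about the underlying occupations changes between the two lines of output; only the cut-off does.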

Should we be worried?

It appears that a loose consensus is starting to emerge. These studies agree that around 30% of jobs will be significantly impacted by AI and robotics, but disagree over whether they will be fully automated or remain intact, albeit with a very different task composition.

As I argue elsewhere, there are some reasons we can stop worrying (for now). A wide range of human factors will determine the pace of adoption, or act as brakes that prevent robots from even entering certain sectors of the economy.

This blog is the first in a two-part series on how we predict, and prepare for, the impacts of automation. You can read the second blog here.
